The randomized Kaczmarz method is a method for solving systems of linear equations:

(1) ![]()

- Sample a random row index

with probability

with probability  .

. - Update to enforce the equation

holds exactly:

holds exactly:

Throughout this post,![Rendered by QuickLaTeX.com \[x_{t+1} \coloneqq x_t + \frac{b_{i_t} - a_{i_t}^\top x_t}{\norm{a_{i_t}}^2} a_{i_t}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-99cc99e6de2ca367d24ee6f20e610735_l3.png)

denotes the

denotes the  th row of

th row of  .

.

What selection probabilities ![]() should we use? The answer to this question may depend on whether the system (1) is consistent, i.e., whether it possesses a solution

should we use? The answer to this question may depend on whether the system (1) is consistent, i.e., whether it possesses a solution ![]() . For this post, we assume (1) is consistent; see this previous post for a discussion of the inconsistent case.

. For this post, we assume (1) is consistent; see this previous post for a discussion of the inconsistent case.

The classical selection probabilities for randomized Kaczmarz were proposed by Strohmer and Vershynin in their seminal paper:

(1) ![]()

(2) ![]()

Surprisingly, to me at least, the simpler strategy (2) often works better than (1). Here is a simple example. Define a matrix ![]() with entries

with entries ![]() , and choose the right-hand side

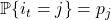

, and choose the right-hand side ![]() with standard Gaussian random entries. The convergence of standard RK with sampling rule (1) and uniform RK with sampling rule (2) is shown in the plot below. After a million iterations, the difference in final accuracy is dramatic: the final relative error 0.00012 was uniform RK and 0.67 for standard RK!

with standard Gaussian random entries. The convergence of standard RK with sampling rule (1) and uniform RK with sampling rule (2) is shown in the plot below. After a million iterations, the difference in final accuracy is dramatic: the final relative error 0.00012 was uniform RK and 0.67 for standard RK!

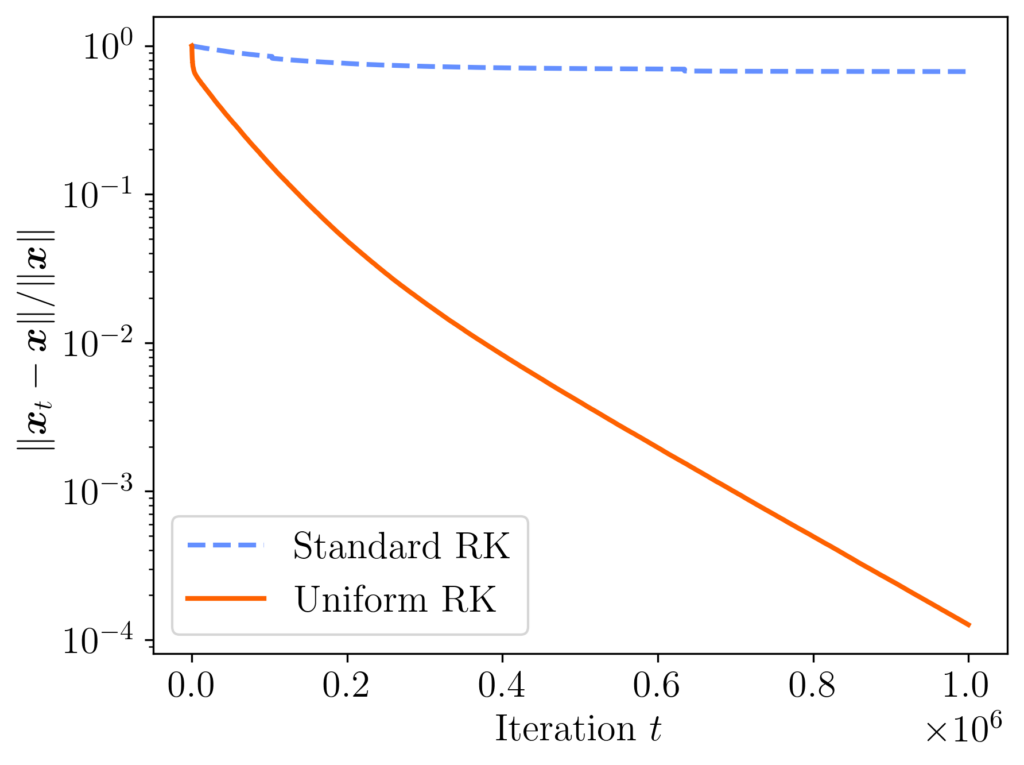

In fairness, uniform RK does not always outperform standard RK. If we change the matrix entries to ![]() , then the performance of both methods is similar, with both methods ending with a relative error of about 0.07.

, then the performance of both methods is similar, with both methods ending with a relative error of about 0.07.

Another experiment, presented in section 4.1 of Strohmer and Vershynin’s original paper, provides an example where standard RK converges a bit more than twice as fast as uniform RK (called “simple RK” in their paper). Still, taken all together, these experiments demonstrate that standard RK (sampling probabilities (1)) is often not dramatically better than uniform RK (sampling probabilities (2)), and uniform RK can be much better than standard RK.

Randomized Kaczmarz Error Bounds

Why does uniform RK often outperform standard RK? To answer these questions, let’s look at the error bounds for the RK method.

The classical analysis of standard RK shows the method is geometrically convergent

(3) ![]()

(4) ![Rendered by QuickLaTeX.com \[\kappa_{\rm dem}(A) = \frac{\norm{A}_{\rm F}}{\sigma_{\rm min}(A)} = \sqrt{\sum_i \left(\frac{\sigma_i(A)}{\sigma_{\rm min}(A)}\right)^2} \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-824557fbf90de5be0f9756f9089728aa_l3.png)

What about uniform RK? Let ![]() denote a diagonal matrix containing the inverse row norms, and introduce the row-equilibrated matrix

denote a diagonal matrix containing the inverse row norms, and introduce the row-equilibrated matrix ![]() . The row-equilibrated matrix

. The row-equilibrated matrix ![]() has been obtained from

has been obtained from ![]() by rescaling each of its rows to have unit norm.

by rescaling each of its rows to have unit norm.

Uniform RK can then be related to standard RK run on the row-equilibrated matrix:

Fact (uniform sampling and row equilibration): Uniform RK on the system

produces the same sequence of (random) iterates

as standard RK applied to the row-equilibrated system

.

Therefore, by (3), the iterates ![]() of uniform RK satisfy the bound

of uniform RK satisfy the bound

(5) ![]()

Row Equilibration and the Condition Number

Does row equilibration increase or decrease its condition number? What is the optimal way of scaling the rows of a matrix to minimize its condition number? These are classical questions in numerical linear algebra, and they were addressed in a classical 1969 paper of van der Sluis. These results were then summarized and generalized in Higham’s delightful monograph Accuracy and Stability of Numerical Algorithms. Here, we present answers to these questions using a variant of van der Sluis’ argument.

First, let’s introduce some more concepts and notation. Define the spectral norm condition number

![]()

Our first result shows us that row equilibration never hurts the Demmel condition number by much. In fact, the row-equilibrated matrix produces a nearly optimal Demmel condition number when compared to any row scaling:

Theorem 1 (Row equilibration is a nearly optimal row scaling). Let

be wide

and full-rank, and let

denote the row-scaling of

to have unit row norms. Then

By scaling the rows of a square or wide matrix to have unit norm, we bring the Demmel condition number to within a ![]() factor of the optimal row scaling. In fact, we even bring the Demmel condition number to within a

factor of the optimal row scaling. In fact, we even bring the Demmel condition number to within a ![]() factor of the optimal spectral norm condition number for any row scaling.

factor of the optimal spectral norm condition number for any row scaling.

Since the convergence rate for randomized Kaczmarz is ![]() , this result shows that implementing randomized Kaczmarz with uniform sampling yields to a convergence rate that is within a factor of

, this result shows that implementing randomized Kaczmarz with uniform sampling yields to a convergence rate that is within a factor of ![]() of the optimal convergence rate using any possible sampling distribution.

of the optimal convergence rate using any possible sampling distribution.

This result shows us that row equilibration can’t hurt the Demmel condition number by much. But can it help? The following proposition shows that it can help a lot for some problems.

Proposition 2 (Row equilibration can help a lot). Let

be wide

and full-rank, and let

denote the maximum ratio between two row norms:

Then the Demmel condition number of the original matrix

satisfies

Moreover, for every

, there exists a matrix

where this bound is nearly attained:

Taken together, Theorem 1 and Proposition 2 show that row equilibration often improves the Demmel condition number, and never increases it by that much. Consequently, uniform RK often converges faster than standard RK for square (or short wide) linear systems, and it never converges much slower.

Proof of Theorem 1

We follow Higham’s approach. Each of the ![]() rows of

rows of ![]() each have unit norm, so

each have unit norm, so

(7) ![]()

![]()

(8) ![]()

![]()

![]()

![]()

![]()

Proof of Proposition 2

Write ![]() . Using the Moore–Penrose pseudoinverse again, write

. Using the Moore–Penrose pseudoinverse again, write

(10) ![]()

![]()

![]()

![]()

To show this bound is nearly obtained, introduce ![]() . Then

. Then ![]() with

with ![]() and

and

![]()

![]()

Practical Guidance

What does this theory mean for practice? Ultimately, single-row randomized Kaczmarz is often not the best algorithm for the job for ordinary square (or short–wide) linear systems, anyway—block Kaczmarz or (preconditioned) Krylov methods have been faster in my experience. But, supposing that we have locked in (single-row) randomized Kaczmarz as our algorithm, how should we implement it?

This question is hard to answer, because there are examples where standard RK and uniform RK both converge faster than the other. Theorem 1 suggests uniform RK can require as many as ![]() more iterations than standard RK on a worst-case example, which can be a big difference for large problems. But, particularly for badly row-scaled problems, Proposition 2 shows that uniform RK can dramatically outcompete standard RK. Ultimately, I would give two answers.

more iterations than standard RK on a worst-case example, which can be a big difference for large problems. But, particularly for badly row-scaled problems, Proposition 2 shows that uniform RK can dramatically outcompete standard RK. Ultimately, I would give two answers.

First, if the matrix ![]() has already been carefully designed to be well-conditioned and computing the row norms is not computationally burdensome, then standard RK may be worth the effort. Despite this theory suggesting it can do quite badly, it took a bit of effort to construct a simple example of a “bad” matrix where uniform RK significantly outcompeted standard RK. (On most examples I constructed, the rate of convergence of the two methods were similar.)

has already been carefully designed to be well-conditioned and computing the row norms is not computationally burdensome, then standard RK may be worth the effort. Despite this theory suggesting it can do quite badly, it took a bit of effort to construct a simple example of a “bad” matrix where uniform RK significantly outcompeted standard RK. (On most examples I constructed, the rate of convergence of the two methods were similar.)

Second, particularly for the largest systems where you only want to make a small number of total passes over the matrix, expending a full pass over the matrix to compute the row norms is a significant expense. And, for poorly row-scaled matrices, sampling using the squared row norms can hurt the convergence rate. Based on these observations, given a matrix of unknown row scaling and conditioning or given a small budget of passes over the matrix, I would use the uniform RK method over the standard RK method.

Finally, let me again emphasize that the theoretical results Theorem 1 and Proposition 2 only apply to square or wide matrices ![]() . Uniform RK also appears to work for consistent systems with a tall matrix, but I am unaware of a theoretical result comparing the Demmel condition numbers of

. Uniform RK also appears to work for consistent systems with a tall matrix, but I am unaware of a theoretical result comparing the Demmel condition numbers of ![]() and

and ![]() that applies to tall matrices. And for inconsistent systems of equations, it’s a whole different story.

that applies to tall matrices. And for inconsistent systems of equations, it’s a whole different story.

Edit: After initial publication of this post, Mark Schmidt shared that the observation that uniform RK can outperform standard RK was made nearly ten years ago in section 4.2 of the following paper. They support this observation with a different mathematical justification

![Rendered by QuickLaTeX.com \[\kappa_{\rm dem} = \frac{\norm{A}_{\rm F}}{\sigma_{\rm min}(A)} = \sqrt{\sum_i \left(\frac{\sigma_i(A)}{\sigma_{\rm min}(A)}\right)^2} \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-0a01bce740f28deced2d1a8b4e6e1002_l3.png)

![Rendered by QuickLaTeX.com \[D \coloneqq \diag\left( \sqrt{\frac{p_j}{p_j^{\rm RK}}} : j =1,\ldots,n\right).\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-bc930770f7b4e20afffe903a4ab372ba_l3.png)

![Rendered by QuickLaTeX.com \[x_{t+1} = \left( I - \frac{a_{i_t}^{\vphantom{\top}}a_{i_t}^\top}{\norm{a_{i_t}}^2} \right)x_t + \frac{b_{i_t}a_{i_t}}{\norm{a_{i_t}}^2}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-cccfb54762bb990499c96331e7019309_l3.png)

![Rendered by QuickLaTeX.com \begin{align*}\expect_{i_t}[x_{t+1}] &= \sum_{j=1}^n \left[\left( I - \frac{a_j^{\vphantom{\top}} a_j^\top}{\norm{a_j}^2} \right)x_t + \frac{b_ja_j}{\norm{a_j}^2}\right] \prob\{i_t=j\}\\ &=\sum_{j=1}^n \left[\left( \frac{\norm{a_j}^2}{\norm{A}_{\rm F}^2}I - \frac{a_j^{\vphantom{\top}} a_j^\top}{\norm{A}_{\rm F}^2} \right)x_t + \frac{b_ja_j}{\norm{A}_{\rm F}^2}\right].\end{align*}](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-0a7f7b3bfad51b7094348db94d2d4254_l3.png)

![Rendered by QuickLaTeX.com \[\expect_{i_t}[x_{t+1}] = \left( I - \frac{A^\top A}{\norm{A}_{\rm F}^2}\right) x_t + \frac{A^\top b}{\norm{A}_{\rm F}^2}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-de4ded17daf001dde92c26025e9c14f3_l3.png)

![Rendered by QuickLaTeX.com \[\expect[x_{t+1}] = \left( I - \frac{A^\top A}{\norm{A}_{\rm F}^2}\right) \expect[x_t] + \frac{A^\top b}{\norm{A}_{\rm F}^2}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-24c5bfe6c1ea70293055deb68a3df117_l3.png)

![Rendered by QuickLaTeX.com \[\expect[x_t] = \left[\sum_{i=0}^{t-1} \left( I - \frac{A^\top A}{\norm{A}_{\rm F}^2}\right)^i \right]\cdot\frac{A^\top b}{\norm{A}_{\rm F}^2}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-eb47c96091b1187b53c318589b696693_l3.png)

![Rendered by QuickLaTeX.com \[\left(\frac{A^\top A}{\norm{A}_{\rm F}^2}\right)^{-1} = \sum_{i=0}^\infty \left( I - \frac{A^\top A}{\norm{A}_{\rm F}^2}\right)^i. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-8d1be156303c168d2de83dbde76e44d6_l3.png)

![Rendered by QuickLaTeX.com \[\left(\frac{A^\top A}{\norm{A}_{\rm F}^2}\right)^{-1} = \sum_{i=0}^{t-1} \left( I - \frac{A^\top A}{\norm{A}_{\rm F}^2}\right)^i + \sum_{i=t}^\infty \left( I - \frac{A^\top A}{\norm{A}_{\rm F}^2}\right)^i.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-08f4b1a705439315fe0fc038e059d01c_l3.png)

![Rendered by QuickLaTeX.com \[\expect[x_t] = x_\star - \left[\sum_{i=t}^\infty \left( I - \frac{A^\top A}{\norm{A}_{\rm F}^2}\right)^i \right]\cdot\frac{A^\top b}{\norm{A}_{\rm F}^2}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-d973041dba49ba471bbfb6b3d4c2b463_l3.png)

![Rendered by QuickLaTeX.com \[\expect[x_t] - x_\star = - \left(I - \frac{A^\top A}{\norm{A}_{\rm F}^2}\right)^t \left[\sum_{i=0}^\infty \left( I - \frac{A^\top A}{\norm{A}_{\rm F}^2}\right)^i \right]\cdot\frac{A^\top b}{\norm{A}_{\rm F}^2}.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-8238e65e7b7df2013504f2a6f71799e6_l3.png)

![Rendered by QuickLaTeX.com \[\expect[x_t] - x_\star = - \left(I - \frac{A^\top A}{\norm{A}_{\rm F}^2}\right)^t x_\star.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-3cbe72663f8ef13c762213b5592bb5d2_l3.png)

![Rendered by QuickLaTeX.com \[\norm{\expect[x_t] - x_\star}^2 \le \norm{I - \frac{A^\top A}{\norm{A}_{\rm F}^2}}^{2t} \norm{x}_\star^2. \]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-e663370119bd02900da0aa4f7b6faf03_l3.png)

![Rendered by QuickLaTeX.com \[I - \frac{A^\top A}{\norm{A}_{\rm F}^2} = I - V \cdot\frac{\Sigma^2}{\norm{A}_{\rm F}^2} \cdot V^\top = V \left( I - \frac{\Sigma^2}{\norm{A}_{\rm F}^2}\right)V^\top.\]](https://www.ethanepperly.com/wp-content/ql-cache/quicklatex.com-468566feb9b39f9e0dd616b47f324ad5_l3.png)